Paul Hammant's Blog: JBehave and Servirtium

Working with JBehave’s lead, Mauro Talevi, and HTTP4K’s lead, David Denton, we now have a demo of Servirtium and JBehave working together.

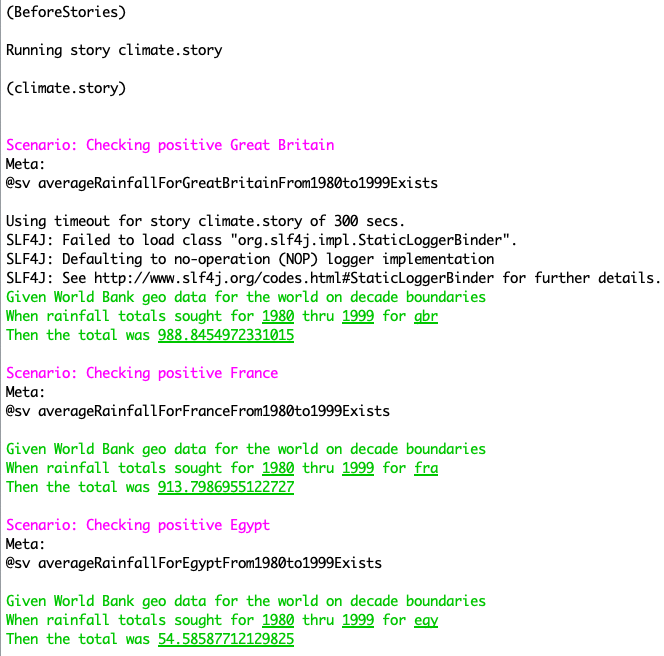

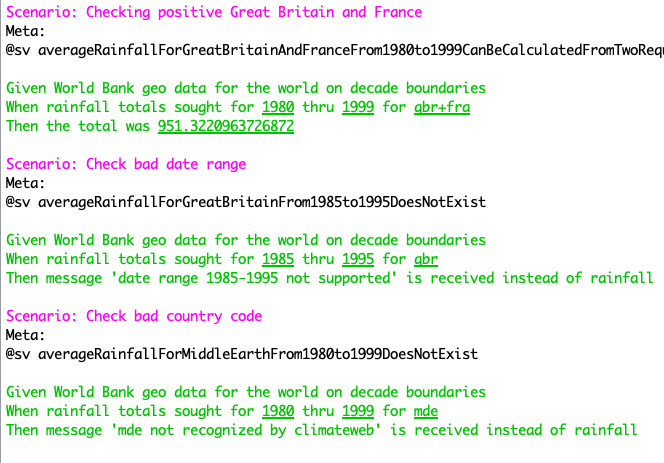

We’re redoing our trusty WorldBank “climate api” tests in JBehave in Java, using HTTP4K’s version of Servirtium (written in Kotlin for general JVM use). The scenarios:

Timings:

- Direct mode (no Servirtium involved) takes 9.3 seconds on my older MacBook Air. That’s via `time mvn test`, and hits WorldBank.com’s web-APIs.
- Servirtium used, with the same tests/scenarios, in record mode: 9.6 seconds. That’s `time mvn test -P record`, and also hits those web-APIs.
- Servirtium used, with the same tests/scenarios, in playback mode: 7.4 seconds. On the command line that is `time mvn test -P replay`, and can pass just fine disconnected from the internet.

The BDD Story is here. The recorded HTTP interactions (Servirtix / Servirtese) are here. You’ll see we’re using a meta-property so JBehave can tell Servirtium which recording to use.

For the uninitiated, we have a contrived Java lib that uses WorldBank’s web-APIs. We want to isolate our dev team from any flakiness of that online service. We note that this particular one is not actually flaky. In a real setting it would be a partner company’s service, and they may not like us hitting their sandbox or live service so often (all the devs, all the CI bots). That was a reality for me ten years ago, working at an airline that was doing many hundreds of full-stack test flight bookings an hour (at peak): one of the depended-on partner orgs’ backend services was being DoS’d by our devs and Jenkins. We got at least one “please don’t” phone call about that. So towards that, we record and playback. Day to day, devs would use replay. Nightly or weekly, in a Jenkins (etc) non-CI job, we re-record and confirm the recording has not changed.

We also use it as an early warning system that the vendor is experimenting with changes in the sandbox, where we would otherwise miss the change in the CHANGELOG email/notification. Or where they accidentally forgot to send that email/notification.

BDD’s value

I generally think that BDD Acceptance Criteria Pay For Themselves Multiple Times, where it’s suggested that you don’t need to actually automate BDD scenarios (Cucumber would call these Cukes) to get value.

In this case, we are automating, but we should critique the scenarios. The Given line is the same for each, and carries a lot of detail but doesn’t act on it. “Decade boundaries” for one. That alludes to the fact that WorldBank’s web API only works for decade-aligned ranges: 1980-89, 1990-99, etc., or multi-decade ranges. Also the “message” or “total was” is icky and needs further thinking.

Then to the value of BDD: we can see the decade boundary limit is artificial when we also look at the markdown recordings. Sure, WorldBank’s APIs give us data in ten-year chunks, but our Java lib can easily deliver 1985 to 1995 if needed. This would be solved by simple looping/filtering in Java (or whatever your language choice is) and calculating the results. The recorded markdown would be two interactions in series, as averageRainfallForGreatBritainAndFranceFrom1980to1999CanBeCalculatedFromTwoRequests.md already is, but some out-of-range years would be excluded from the totals to be returned to the invoker. The point being that BDD makes some classes of design imperfections easier to see.
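To make the looping/filtering idea concrete, here is a minimal sketch in Java. It is not the demo's actual code: `DecadeRangeSketch`, `DecadeFetcher`, and the assumption that each decade request yields a year-to-value map are all hypothetical, invented purely for illustration. The real WorldBank response shape may well differ.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

public class DecadeRangeSketch {

    /**
     * Hypothetical stand-in for one HTTP call to a decade-aligned endpoint.
     * Assumed (not confirmed by the real API) to return year -> value.
     */
    public interface DecadeFetcher {
        Map<Integer, Double> fetch(int fromYear, int toYear);
    }

    /** Decade-aligned chunks covering the range, e.g. 1985..1995 -> [1980,1989], [1990,1999]. */
    public static List<int[]> decadesCovering(int fromYear, int toYear) {
        if (fromYear > toYear) {
            throw new IllegalArgumentException("fromYear must not be after toYear");
        }
        List<int[]> chunks = new ArrayList<>();
        // Round the start down to its decade boundary, then step ten years at a time.
        for (int start = (fromYear / 10) * 10; start <= toYear; start += 10) {
            chunks.add(new int[] {start, start + 9});
        }
        return chunks;
    }

    /** Averages the values for fromYear..toYear inclusive, one request per decade chunk. */
    public static double averageOver(int fromYear, int toYear, DecadeFetcher fetcher) {
        double sum = 0;
        int count = 0;
        for (int[] chunk : decadesCovering(fromYear, toYear)) {
            Map<Integer, Double> decade = fetcher.fetch(chunk[0], chunk[1]);
            for (Map.Entry<Integer, Double> entry : decade.entrySet()) {
                int year = entry.getKey();
                // Filter: drop the years the invoker did not ask for (e.g. 1980-84, 1996-99).
                if (year >= fromYear && year <= toYear) {
                    sum += entry.getValue();
                    count++;
                }
            }
        }
        if (count == 0) {
            throw new IllegalStateException("No data for " + fromYear + "-" + toYear);
        }
        return sum / count;
    }
}
```

With something like this, a request for 1985 to 1995 would still show up in the recording as two decade-aligned interactions in series, and the trimming of out-of-range years would happen only on the Java side.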