Paul Hammant's Blog: Legacy Java Applications: Strangulation inside Tomcat

Legacy Java Servlet apps

Since its launch in 1997, ‘Java Servlets’ has been a mainstream choice for enterprise application development. Sun quickly added JSP, Tag Libraries and JSF. The open source community built many web frameworks on top of them. Struts 1.0 was the first web framework to hit big in 2000, and is maybe the earliest Java web-framework you will still encounter in the enterprise today. If it is still around in your deployed applications, it is definitely legacy as the last release was in 2008, and it was formally declared end of life in April of this year. Someone is always going to say rewrite, and sometimes rewrites pay off.

Contributions to problems for large enterprise systems:

Classic Singletons versus Dependency Injection

While it was known in smaller circles that solutions leaning on Singletons (the design pattern not the Spring etc idiom) would slowly become unmaintainable, the vast majority of professional developers in 2000 did not at that time know it. Dependency Injection hit it big in 2004 with Martin’s blog entry, and in 2006 Spring Framework’s XML way of decomposing Dependency Injection web applications became the main way. In itself, that had problems, but let us mark 2006 as the turning point for the majority.

Since then a debate has continued as to whether a Dependency Injection container (I co-created one too - PicoContainer) is needed, or whether the semantics of injectable classes/components are enough on their own. Also since then, graduates have arrived in the programming field who have not heard of Dependency Injection (or its encompassing principle - Inversion of Control), resetting the clock incrementally. There’s also the rise of dynamic languages (Python, Ruby) and their non-container reality (though the class idioms of DI still apply according to Alex Martelli and Jim Weirich).

Other causes

The “static state” problems of singletons aside, Java Servlet applications in the modern age can also be scarred by:

- Too many servlet filters - each new team puts one in front of all others

- Overuse of ThreadLocal to perform complex operations

- A service locator in the mix, but with no plans to move further towards it, or away from it

- Second/Third component frameworks in the mix. Say the team started with Struts1, then put Wicket in too (for different pages)

What is Strangulation again?

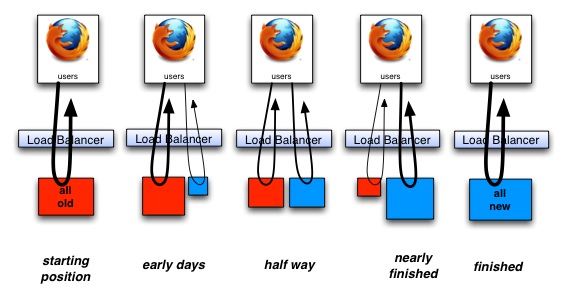

Remember, strangulation means safe incremental migration from bad to good, while simultaneously adding new functionality, and putting a part old & part new application live iteratively.

It is different to a plain rejuvenation of a legacy codebase, in that you’ve determined that the end goals of rejuvenation are not attainable, or the starting point is too far removed.

See my previous blog entries, too

- Legacy App Rejuvenation

- A singleton Escape Plan

- as well as yesterday’s Legacy Application Strangulation : Case Studies

- and Martin’s definitive article: StranglerApplication.

Your new problem

After you’ve decided to strangle rather than rewrite, your new problem is that you don’t really want to add any more code to the existing WAR-file application because it’s a house of cards, and could collapse with any subtle coding mistake. It’s brittle and perhaps there are few unit tests protecting it.

Web App Strangulation generally

As implemented hundreds of times in the industry, web-app strangulation involves having a proxy run in front of the old and the new application, and via mappings route incoming HTTP requests to either the old or new application. Over a period of time you’d add more code (and functionality) to the new app, and take more away from the old one, with corresponding changes in the routing proxy. That proxy could be a big-iron programmable load balancer like F5. It could also be an open source piece like HAProxy. Those two are the high-scale ways of doing it. Closer to the application code, you could just program the routing in an Apache layer.
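The mapping decision such a proxy makes can be sketched in plain Java. This is only an illustration of the idea, not how F5, HAProxy or Apache are actually configured; the class name `StranglerRoutes`, the prefixes and the `Backend` labels are all made up for the example.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical sketch: decide per request path whether the old or the new app serves it. */
final class StranglerRoutes {

    enum Backend { OLD_APP, NEW_APP }

    // Path prefixes that have already been migrated (illustrative values only).
    private final List<String> migratedPrefixes = new ArrayList<>();

    StranglerRoutes migrate(String prefix) {
        migratedPrefixes.add(prefix);
        return this;
    }

    /** Anything not explicitly migrated still goes to the old application. */
    Backend route(String path) {
        for (String prefix : migratedPrefixes) {
            if (path.startsWith(prefix)) {
                return Backend.NEW_APP;
            }
        }
        return Backend.OLD_APP;
    }

    public static void main(String[] args) {
        StranglerRoutes routes = new StranglerRoutes().migrate("/account/");
        System.out.println(routes.route("/account/profile")); // NEW_APP
        System.out.println(routes.route("/checkout"));        // OLD_APP
    }
}
```

Each release of the new app would add a prefix or two to the list, until nothing falls through to the old application any more.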

The pure-Java solution

This is not necessarily better, but if you are constrained by other deployment-stack and scaling considerations, coding this safely inside the servlet container (Tomcat, Jetty, etc) is possible. That’s what I’m going to outline.

A second WAR file.

WAR files are the drop-in self-contained deployment archive for servlet containers. By convention one called ROOT.war will deploy without a context, and ones named in any other way lend their file name to the context in question. At least by default that’s true for most web-app containers.

For this solution, we’re going to have a new WAR file application that’s going to occupy the old one’s place in the directory structure for the site in question. If the old app was found at example.com/*, after implementation the new app will be found there instead. What was the old app will be moved away from that root context. That’s example.com/oldApp/* in this example.

The initial version of this delegates all HTTP requests to the equivalent URL on the old application. That’s as simple as prepending oldApp/ to the path and executing a few lines of Servlet magic (see below for how). One by one, end-points are rewritten in the new war file, and obsoleted in the old one. It could be that code migrates too (as it’s rejuvenated) but whether that happens or not, you should have a high level of tests according to the test pyramid to guarantee quality. Of those, the functional tests can step into the old application and back again if it makes sense.
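The "prepend oldApp/" step is small enough to show on its own. A minimal sketch, assuming the old application lives at the `/oldApp` context from this example; the class and method names are hypothetical, and no servlet classes are involved yet.

```java
/** Hypothetical helper: map an incoming path to its equivalent on the old application. */
final class OldAppUrls {

    // Context the old application was moved to in this article's example.
    static final String OLD_CONTEXT = "/oldApp";

    /** Same path and query string, but under the old application's context. */
    static String oldAppUrl(String path, String query) {
        String url = OLD_CONTEXT + (path.startsWith("/") ? path : "/" + path);
        return (query == null || query.isEmpty()) ? url : url + "?" + query;
    }

    public static void main(String[] args) {
        System.out.println(oldAppUrl("/orders/42", "page=2")); // /oldApp/orders/42?page=2
        System.out.println(oldAppUrl("index.jsp", null));      // /oldApp/index.jsp
    }
}
```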

The old app also needs a new servlet-filter front-running it to prevent the end-points being visible from the end-user TCP/IP addresses. You may still want the end-points available internally for debugging purposes.
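The check at the heart of such a filter might look like the sketch below. It assumes "internal" means loopback or a private-range address, which is only one possible policy; the class name `InternalOnly` is made up, and only JDK classes are used so that it runs standalone.

```java
import java.net.InetAddress;
import java.net.UnknownHostException;

/** Hypothetical sketch: is this caller on the internal network? */
final class InternalOnly {

    /** True for loopback and private-range (site-local) addresses, false otherwise. */
    static boolean isInternal(String remoteAddr) {
        try {
            // For a literal IP address this only validates the format; no DNS lookup happens.
            InetAddress addr = InetAddress.getByName(remoteAddr);
            return addr.isLoopbackAddress() || addr.isSiteLocalAddress();
        } catch (UnknownHostException e) {
            return false; // unparseable input is treated as external
        }
    }

    public static void main(String[] args) {
        System.out.println(isInternal("127.0.0.1")); // true
        System.out.println(isInternal("10.1.2.3"));  // true
        System.out.println(isInternal("8.8.8.8"));   // false
    }
}
```

In a real filter the string would come from `ServletRequest.getRemoteAddr()`, and the filter would send an error status instead of calling the chain when the check fails. If a proxy sits in front of the container, that address is the proxy's rather than the end-user's, so the policy would need adjusting.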

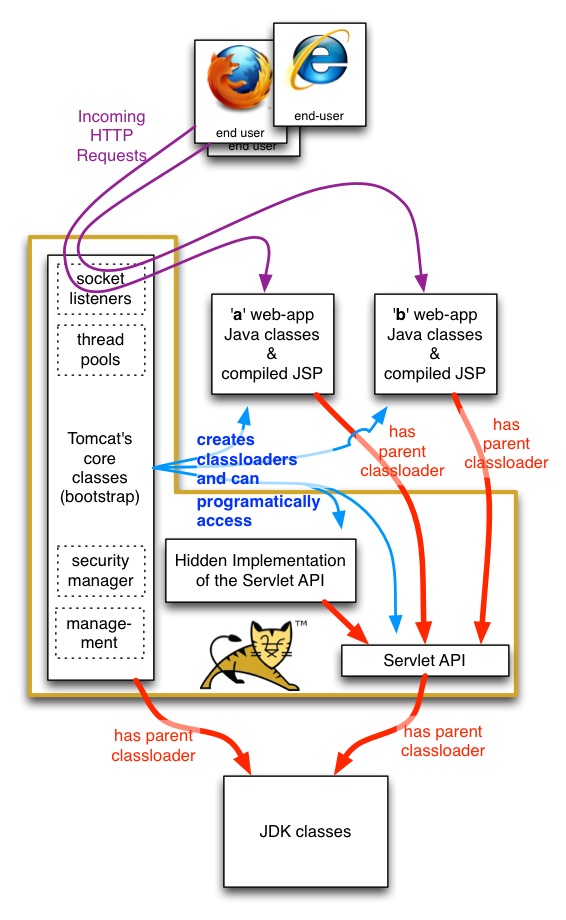

One War-file invoking endpoints in another

If you have two web applications ‘a’ and ‘b’ (a.war & b.war), Servlets or Filters in ‘a’ can invoke an end-point in ‘b’ like so:

    // HttpServletResponseBuffer and MyHttpServletResponse are this application's own
    // classes, not part of the Servlet API. The buffer captures whatever 'b' writes.
    HttpServletResponseBuffer tmpResponse = new HttpServletResponseBuffer();
    tmpResponse.copyAFewThingsFromTheUpStreamResponse(response);

    HttpServletRequest servletRequest = (HttpServletRequest) request;
    // getContext("/b") returns null if the container restricts cross-context access.
    ServletContext bContext = servletRequest.getSession().getServletContext().getContext("/b");
    RequestDispatcher endPoint = bContext.getRequestDispatcher("/aStaticOrDynamicResourceOrEndpoint");

    MyHttpServletResponse newResponse = new MyHttpServletResponse((HttpServletResponse) response);

    endPoint.include(request, tmpResponse); // or .forward(..)
    // unpack payload from tmpResponse, and do things to your stored response.

The complexity is that you can only get back a stream of characters/bytes from ‘b’. You’ll have to process those streams as if they’d been acquired by TCP/IP. That includes parsing if you’re talking about JSON or XML. That is the deliberate design of the servlet container, in that servlet apps (different war files) are in different class-loaders so that they can’t interfere with each other programmatically - the same classic singleton in two war files is actually two totally separate instances. Here’s a view of the classloaders (red lines show parent and grandparent classloaders):

They can still interfere with each other in terms of CPU availability as the JVM is not able to protect one web-app from another’s CPU or socket busyness. That’s Sun’s implementation shortcoming, that Oracle can maybe remedy in the future.
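Treating the output of ‘b’ as a plain byte stream, as described above, might look like the following sketch. `CapturedBody` is a made-up stand-in for the buffering part of a response wrapper, UTF-8 is an assumed encoding, and the XML payload is invented for the example.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.OutputStreamWriter;
import java.io.PrintWriter;
import java.nio.charset.StandardCharsets;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;

/** Hypothetical sketch: collect what the other web-app writes, then parse it like network data. */
final class CapturedBody {

    private final ByteArrayOutputStream bytes = new ByteArrayOutputStream();
    private final PrintWriter writer =
            new PrintWriter(new OutputStreamWriter(bytes, StandardCharsets.UTF_8));

    /** What a response wrapper would hand to 'b' from its getWriter() method. */
    PrintWriter getWriter() {
        return writer;
    }

    /** Everything written so far, as raw bytes. */
    byte[] toByteArray() {
        writer.flush();
        return bytes.toByteArray();
    }

    /** Parse the bytes as XML and read one attribute of the root element. */
    static String rootAttribute(byte[] xml, String name) {
        try {
            Document doc = DocumentBuilderFactory.newInstance()
                    .newDocumentBuilder()
                    .parse(new ByteArrayInputStream(xml));
            return doc.getDocumentElement().getAttribute(name);
        } catch (Exception e) {
            throw new IllegalStateException("Could not parse body from the other web-app", e);
        }
    }

    public static void main(String[] args) {
        CapturedBody body = new CapturedBody();
        body.getWriter().print("<order id=\"42\"/>"); // pretend 'b' wrote this
        System.out.println(rootAttribute(body.toByteArray(), "id")); // 42
    }
}
```

No object from ‘b’ crosses into ‘a’ here, only bytes, which is why the separate class-loaders do not get in the way.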

There’s some extra magic required to allow ‘a’ to invoke ‘b’, as that’s not allowed by default. At least for Tomcat, context.xml needs to have the attribute crossContext="true" set. Here is a Perl one-liner for that, as that’s outside the war file:

perl -p -i.bak -e 's/<Context>/<Context crossContext=\"true\">/g' /path/to/tomcat_install/conf/context.xml

Threading

As at least Tomcat and Jetty have implemented it, no new threads are used when ‘a’ invokes something in ‘b’. This is important, as you would otherwise worry about a drop in capacity for the part-strangled “application” as you put it into production. If the containers were to silently use a new thread during include(..) or forward(..), you would see capacity approximately halve, assuming the machine (or virtual machine) was more or less fully utilized.
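One way to check this for yourself is to record the thread on the ‘a’ side and compare it with the thread seen on the ‘b’ side. The sketch below shows only that comparison, with plain method calls standing in for the dispatch; it does not exercise a container, and all names in it are hypothetical.

```java
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

/** Hypothetical sketch: a synchronous call stays on the caller's thread, a hand-off does not. */
final class SameThreadCheck {

    /** Stands in for the end-point in 'b': reports the thread it ran on. */
    static Thread endPointInB() {
        return Thread.currentThread();
    }

    /** What include(..)/forward(..) are described as doing: a plain synchronous call. */
    static Thread directCall() {
        return endPointInB();
    }

    /** The scenario to worry about: the work is handed to a second thread. */
    static Thread handedOff() {
        ExecutorService pool = Executors.newSingleThreadExecutor();
        try {
            Callable<Thread> task = SameThreadCheck::endPointInB;
            return pool.submit(task).get();
        } catch (Exception e) {
            throw new IllegalStateException(e);
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        Thread caller = Thread.currentThread();
        System.out.println(directCall() == caller); // true
        System.out.println(handedOff() == caller);  // false
    }
}
```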

Shared aspects.

There are lots of things that come down from the browser with an HTTP request. The URL is an obvious one, as is the payload of the request (POSTs have post-fields). There are also cookies, and some may be pertinent to your new app, with others for the old app. You could choose to separate those if it matters, or let both applications see them.

There’s also a JSESSIONID for Java Servlet applications, used to identify who a request is on behalf of. Sharing that for two apps (old and new) serving the same end-user request is perfectly OK, and like the threading section above means a more streamlined strangulation.

Further reading.

Sitemesh has been using context.getRequestDispatcher(..).forward(..) for 15 years. A legendary framework from legendary developers (Joe Walnes, Mike Cannon-Brookes). Here’s the critical class. You will want to copy sections of this codebase (particularly the tricks they are doing to ‘request’ and ‘response’) if you are going in the direction I outline.

I’ve made an example of an ‘a’ and ‘b’ web-app scenario. It is on Github: github.com/paul-hammant/servletDispatcherTest and there are instructions there as to how to deploy it yourself for a tryout. There are servlets/filters and static resources. Shown is accessing those things directly, or via an implicit getContext('/b').getRequestDispatcher(..).include(..) route. I’ve cheated and used mime types of “text/plain” to make the code smaller, but it would be the same for HTML, XML, GIFs, JSON, etc. It also goes out of its way to show that one thread is used for all of a request.

Thanks to

Joe Walnes (of Sitemesh fame) and Mark Thomas (Tomcat mail-list)