Paul Hammant's Blog: Features I would love source control tools to have

Lucas Ward and I published a Source Control Maturity Model (SCMM) article nearly three years ago. I had been thinking of it for many years (particularly the PVC Dimensions being level -1), and Lucas was a great collaborator to publish it with and to crystallize the ideas. It was inspired by the Capability Maturity Model, of course.

This article is a follow-up. It covers where I’d like source control to go, and re-evaluates the article Lucas and I previously wrote.

Recap of the Feb-2010 blog entry

Summarizing the previously defined levels:

- Level 0: No source control.

- Level 1: Systems that hold revisions safely on a remote server, but really work best if that remote server isn’t too far away and staff don’t step on each other’s toes too much in the same source files, while mostly avoiding branching because it is expensive and everything is slow.

- Level 2: Faster, but without atomic commits; multi-user mode is bearable.

- Level 3: Faster still, plus atomic commits; branching capabilities are decent, with merge-point tracking.

- Level 4: Faster again; merge-point tracking is better, merge-through-rename is rudimentary.

- Level 5: All history locally available, cheap local branching, merge-through-rename is smooth.

Level -1 was reserved for a tool so bad that using nothing at all was preferable.

Things I’d like to see in the next generation of source-control tools

Semantic diff/merge.

(Mar 29, 2013 - The PlasticSCM folks registered semanticmerge.com. One pint of beer owed - and agreed :)

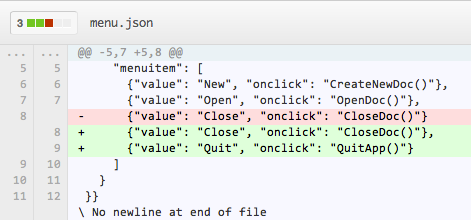

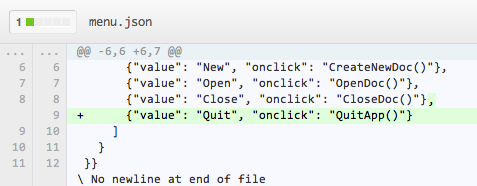

This alludes to a level 6 on the SCMM. Diffs can be too noisy because the tools make no allowance for specific languages. Consider tidied JSON: adding one element to a list shows up as a new line and a changed line (the comma). It does not need to be as ignorant as:

Better, perhaps, would be this:

Sure, some of the advanced diffing/merging tools like BeyondCompare, P4Merge, and Kdiff3 do a good job of this visualization, but it takes some effort to hook them into a source-control tool-chain, when the built-in merge technology should be as smart, but without having to prompt as much for human arbitration when there are conflicts.
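To make the JSON case above concrete, here is a minimal Python sketch. It is my own illustration and not how any of the tools named here work: `line_diff` stands in for a plain line-based diff, and `semantic_diff` is a hypothetical helper that compares the parsed structures instead. Lists are compared by index, which is a simplifying assumption; an insertion in the middle of a list would still look noisy.

```python
import difflib
import json

OLD_TEXT = """{
  "fruits": [
    "apple",
    "banana"
  ]
}"""

NEW_TEXT = """{
  "fruits": [
    "apple",
    "banana",
    "cherry"
  ]
}"""


def line_diff(old_text, new_text):
    """Return the added and removed lines that a line-based diff reports."""
    delta = difflib.ndiff(old_text.splitlines(), new_text.splitlines())
    return [line for line in delta if line.startswith(("- ", "+ "))]


def semantic_diff(old, new, path="$"):
    """Compare two parsed JSON values and describe changes by location."""
    if isinstance(old, dict) and isinstance(new, dict):
        changes = []
        for key in sorted(old.keys() | new.keys()):
            child = f"{path}.{key}"
            if key not in old:
                changes.append(f"added {child} = {json.dumps(new[key])}")
            elif key not in new:
                changes.append(f"removed {child}")
            else:
                changes.extend(semantic_diff(old[key], new[key], child))
        return changes
    if isinstance(old, list) and isinstance(new, list):
        changes = []
        # Naive assumption: elements are matched by position only.
        for i in range(max(len(old), len(new))):
            child = f"{path}[{i}]"
            if i >= len(old):
                changes.append(f"added {child} = {json.dumps(new[i])}")
            elif i >= len(new):
                changes.append(f"removed {child}")
            else:
                changes.extend(semantic_diff(old[i], new[i], child))
        return changes
    if old == new:
        return []
    return [f"changed {path}: {json.dumps(old)} -> {json.dumps(new)}"]


if __name__ == "__main__":
    print("\n".join(line_diff(OLD_TEXT, NEW_TEXT)))
    print("\n".join(semantic_diff(json.loads(OLD_TEXT), json.loads(NEW_TEXT))))
```

The line-based view reports the comma change on the "banana" line as well as the new line, while the structural view reports a single addition at the end of the list.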

Lucas says:

“The rise in usage of non statically-typed languages makes this even more imperative. In java for example, it’s likely that a bad merge would be detected by at least a static check, even though it leaves a host of other possible issues. In javascript you might never know unless you have very good testing. And testing of Javascript applications is still not easy, so it’s a good example. I can’t count the number of times I’ve been screwed by an automated tool manipulating JS code”.

Taking it further, a source-control tool at the top level, should also be able to understand “method rename” (and other refactorings), rather than a series of adds and deletes. Another example is “method reorder” where one method/function is moved below another. There are many others that are closer to our understanding of refactoring operations.
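As a rough illustration of recognizing “method reorder” and “method rename” rather than adds and deletes, here is a small Python sketch. It uses Python source and the standard `ast` module purely as a stand-in for whatever language a real tool would parse; `function_fingerprints` and `describe_change` are hypothetical names, and the approach only looks at top-level functions. It would not, for instance, follow a rename into the call sites or into a recursive function’s own body.

```python
import ast


def function_fingerprints(source):
    """Map each top-level function name to a dump of its arguments and body.

    ast.dump omits line numbers by default, so moving a function within
    the file does not change its fingerprint.
    """
    result = {}
    for node in ast.parse(source).body:
        if isinstance(node, ast.FunctionDef):
            body = "".join(ast.dump(stmt) for stmt in node.body)
            result[node.name] = ast.dump(node.args) + body
    return result


def describe_change(old_source, new_source):
    """Describe the difference between two versions as refactoring-like steps."""
    old = function_fingerprints(old_source)
    new = function_fingerprints(new_source)
    changes = []

    removed = [name for name in old if name not in new]
    added = [name for name in new if name not in old]
    for gone in removed:
        # A vanished function whose body reappears under a new name is a rename.
        match = next((name for name in added if new[name] == old[gone]), None)
        if match is not None:
            changes.append(f"renamed {gone} -> {match}")
            added.remove(match)
        else:
            changes.append(f"removed {gone}")
    changes.extend(f"added {name}" for name in added)

    kept_old = [name for name in old if name in new]
    kept_new = [name for name in new if name in old]
    if kept_old != kept_new:
        changes.append("reordered functions")
    changes.extend(
        f"modified {name}" for name in kept_old if old[name] != new[name]
    )
    return changes


ORIGINAL = "def a():\n    return 1\n\ndef b(x):\n    return x + 1\n"
REORDERED = "def b(x):\n    return x + 1\n\ndef a():\n    return 1\n"
RENAMED = "def a():\n    return 1\n\ndef increment(x):\n    return x + 1\n"

if __name__ == "__main__":
    print(describe_change(ORIGINAL, REORDERED))
    print(describe_change(ORIGINAL, RENAMED))
```

A text diff would show both of these edits as blocks of deleted and added lines; a tool working at this level could instead record one reorder or one rename, which is the information a merge would need.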

This matters for merging of course. Most of all “merge-through-rename”, but not only that. We want merges to be automatic and safe, and that’s more likely with smarter tools. Merge-through-rename was the high bar for the 2010 article, and it referred to three-way merges that understood carriage-return delimited text really well. For a team wanting to refactor with impunity and have merges work across branches (or as you frequently update the working copy in your local checkout), having the source-control tool understand what you did and allow auto-merge through conflict resolutions is key. At least it would move the bar for what a conflict is.

There is a sub-chapter of “programming” that is called Intentional. Charles Simonyi’s (friend of ThoughtWorks) Intentional Software solves some of the same problems with a non-text, non CR-delimited ‘source’ concept. I’m not talking about that tech.

Syndication Using Source-Control

This is substantially smarter sub-modules or externals, and mechanisms to get different implementations of source-control tools to collaborate. At the end of January I wrote about something that would be useful here: allowing local modifications to a sub-module, to better support syndication of versioned content and maintained divergence. Sure, it’s pretty much only me that’s asking for this, but I think it would be a logical next step for repos. Here’s that rambling article that uses CMS and content syndication as a justification. Refer to the permissions item below too.

Things we forgot, or didn’t underline enough, in 2010.

Permissions.

Perforce, TFS and Subversion have the ability to maintain separate read and write access for individual branches, or directories within branches. Git and Mercurial do not. If you have, say, database passwords in a production or release branch, and don’t want developers to see them, then Perforce, Subversion and TFS are going to be stronger tools, as you can restrict read access to that branch. You also may not want developers to be able to change code in a branch that’s mapped to production, or is a staging release, but still allow them to have read access to the source. Debugging could be a reason for that. These tools allow you to restrict access to sub-directories too. This is hugely powerful, and perhaps I’ll drill into it in a follow-up article.

GitHub has Forks (which do have separate permissions), but that’s not the same thing as being able to be fine-grained down to the branch/directory/resource level.
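The kind of branch and directory permissions described above can be modelled in a few lines. This Python sketch is only an illustration under my own assumptions: the `RULES` table, the group names and the paths are invented, and it does not reproduce the actual configuration format of Perforce, TFS or Subversion. The idea shown is simply that the most specific matching path wins.

```python
# Hypothetical rules: path prefix -> {group: access}, where access is
# "" (nothing), "r" (read only) or "rw" (read and write).
RULES = {
    "/": {"developers": "rw", "release-managers": "rw"},
    "/branches/production": {"developers": "r", "release-managers": "rw"},
    "/branches/production/config/secrets": {
        "developers": "",
        "release-managers": "rw",
    },
}


def access_for(group, path, rules=RULES):
    """Return the access string from the most specific rule covering path."""
    best = ""
    best_len = -1
    for prefix, grants in rules.items():
        covers = (
            prefix == "/" or path == prefix or path.startswith(prefix + "/")
        )
        if covers and group in grants and len(prefix) > best_len:
            best, best_len = grants[group], len(prefix)
    return best


def can_read(group, path, rules=RULES):
    return "r" in access_for(group, path, rules)


def can_write(group, path, rules=RULES):
    return "w" in access_for(group, path, rules)


if __name__ == "__main__":
    for path in (
        "/trunk/src/Main.java",
        "/branches/production/src/Main.java",
        "/branches/production/config/secrets/db.properties",
    ):
        print(path, can_read("developers", path), can_write("developers", path))
```

With these made-up rules, developers can read the production branch for debugging but not change it, and cannot see the directory holding the passwords at all.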

Distributed nature

Mercurial and Git (the two at level 5 as we had it) are the two that qualify as DVCS amongst the ones we listed. While a corporate user of source control making a single deployable thing (say a shopping site) is going to consider one repo (and one branch within that) to be the canonical place where work is categorized as “definitely done”, in terms of development at least, there are other uses of source control that do not need a canonical server repo. Maintained divergence of code as seen by more than one ‘authority’ is one example. The source-control tools that must have a master (like Perforce, TFS and Subversion) are much weaker for that usage scenario. The ‘local branching’ that Git/Mercurial offer is tremendously empowering, but some enterprises are going to feel better having a single anointed master repository on some controlled-access server. Subversion as the definitive central repo, with git-svn doing the checkout, is popular. There’s something similar with Perforce’s Git-Fusion too.

Rewriting history

Some of the ‘canonical server’ tools ensure that history is not rewritten over the client API. Some of the DVCS tools don’t have a restriction in that regard. Sometimes the Sarbanes-Oxley Act (SOX) is mentioned, often by non-lawyers like me. There’s a hypothesis that being able to rewrite (modify or delete) history works against SOX compliance. Mercurial has a mode of operation that prevents the rewriting of history, so I’m told, but I can’t find online info for that.

Source-Control tool ‘cruft’.

In the case of Subversion there was a .svn folder in every folder under SCM. That was neat in that it allowed you to quickly copy that folder (and keep its source-control linkage) to another place. It was cruft though. ASP.Net developers used to have a hack to have _svn folders instead of .svn folders to allow their tools to be compatible with Subversion. In recent releases of Subversion, this has been done away with: there’s just one .svn folder, and it is at the root now.

Also in the case of Subversion (historically) there were “property-lists” that were associated with the source file. That’s useful for meta-data, but a previous choice was to use it for merge-point tracking. In use, for complex branch designs, it was an incredible mess.

Partial tree checkout.

This very much relates to permissions above. Subversion, Perforce and TFS can check out a subdirectory of any branch. Normally you check out trunk, a tag or a branch:

$ svn checkout http://svn.apache.org/repos/asf/spamassassin/trunk

# works

$ svn checkout http://svn.apache.org/repos/asf/spamassassin/branches/3.0

# works

It does not stop there though. You can go down to a sub-directory and check out that directory and everything below it, or even a single resource (Perforce, TFS). For Subversion, single files are not allowed, as there would be nowhere to put the .svn folder.

$ svn checkout http://svn.apache.org/repos/asf/spamassassin/trunk/build/

# works

$ svn checkout http://svn.apache.org/repos/asf/spamassassin/trunk/META.yml

svn: E200007: URL 'http://svn.apache.org/repos/asf/spamassassin/trunk/META.yml' refers to a file, not a directory

# fails for Subversion

Secondly, to varying degrees, a client specification can be configured to create a mask of what’s wanted in a checkout. Perforce has client specs that allow such configuration (including locally renaming directories); TFS has Workspaces with working folder mappings and cloaking. Subversion can omit subdirectories from a checkout via sparse checkout, which is also powerful, but a little different: it leaves you with the option of checking them out later. You’re not going to need anything more than the simplest default configuration 99% of the time, but sometimes you’ll be happy you had the choice of something more sophisticated.
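The idea of a checkout mask with local renaming can be sketched as a list of mappings. The Python below is a simplified model under my own assumptions, loosely inspired by the client-spec idea described above; `VIEW` and `local_path` are hypothetical, and this is not the real syntax or matching logic of Perforce, TFS or Subversion. In this sketch a later mapping overrides an earlier one, and a target of `None` masks a subtree out.

```python
# Hypothetical view: (repository prefix, local prefix or None to exclude).
VIEW = [
    ("trunk/", "work/"),               # check out trunk, renamed locally to work/
    ("trunk/docs/", None),             # mask out the docs subtree
    ("trunk/build/", "tools/build/"),  # place build/ somewhere else locally
]


def local_path(repo_path, view=VIEW):
    """Return where repo_path lands locally, or None if it is not checked out.

    Every mapping whose prefix matches is applied in order, so the last
    matching line decides the outcome.
    """
    target = None
    for repo_prefix, local_prefix in view:
        if repo_path.startswith(repo_prefix):
            if local_prefix is None:
                target = None
            else:
                target = local_prefix + repo_path[len(repo_prefix):]
    return target


if __name__ == "__main__":
    for path in (
        "trunk/src/Main.java",
        "trunk/docs/index.html",
        "trunk/build/build.xml",
        "branches/3.0/README",
    ):
        print(path, "->", local_path(path))
```

Anything not covered by a mapping is simply left out of the working copy, which is the "mask" part; the remapped `build/` directory shows the local renaming part.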

Of course, a lot of things have changed in three years.

Plastic SCM comes on the scene as a force. It’s supposed to be quite competitive, but I’ve not had a chance to try it. It’s not even the only new kid on the block. Let’s not even begin to talk about alternate source-control science around the likes of Darcs.

Microsoft’s Team Foundation Server (TFS) gets a lot better. Microsoft used ‘Source Depot’ (‘sd’ on the command line) internally from the late nineties onwards. It was a discrete recompilation of Perforce (‘p4’ on the command line) for them. TFS is Microsoft’s own attempt at the same idea. I’m not sure if it is entirely the same though. Internally they flipped over from Source Depot somewhere between 2008 & 2009. Even today there are fewer menu options in the UI than for Perforce. Perforce (the company) have a 12-page PDF comparison you may want to read if you care. Perforce (the tool) is foremost a command-line tool. UI tools for Perforce (P4V & P4MERGE) seem secondary. For TFS, Visual Studio feels like the primary interface, and the command line feels secondary. There’s no doubt that TFS will keep pushing though. It is possible the intelligentsia will never be happy with the amount of client-server I/O that can happen during “open for edit” (flipping the read-only bit) that dogs TFS and Perforce.

Subversion moves forward too. Better branching and merge-point tracking. The .svn folders that were sprayed all over the place are gone. A bug that cost a team I was on hundreds of hours of lost time was fixed.