Paul Hammant's Blog:

Decorators in the DOM

I previously wrote about decorators for web pages and whether they worked on a pull or push basis. That article was confined to decorators that worked on HTML markup in its textual form. These were implicitly server-side technologies. To recap, one technology that was talked about was the legendary Sitemesh (“site-mesh” - Siri pronounces this “sit-e-mesh”, sigh) component by Joe Walnes.

I’ve also recently written about How Google makes a consistent top-navigation across multiple apps. That is in the same area of concern, though not exactly decoration. It turns out Google did site-experience stitching on the server side too.

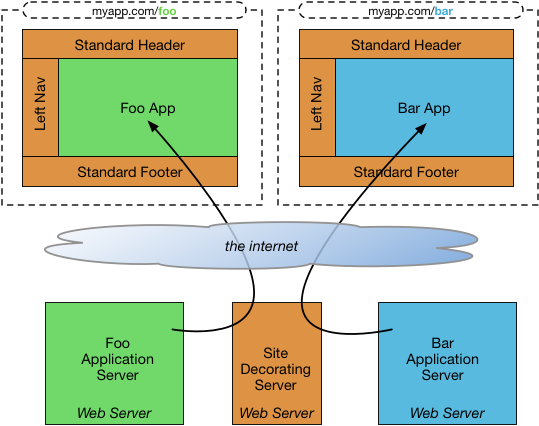

The classic way Sitemesh stitched a site experience

The Sitemesh servlet filter took whatever the intended app page was, and decorated it before sending it to the browser in a single piece. It also worked well for static content. The applications themselves were often in the same web server, but I’m showing separate web servers, which Sitemesh also supported. Those apps could be written in different technologies, such as Perl or PHP.

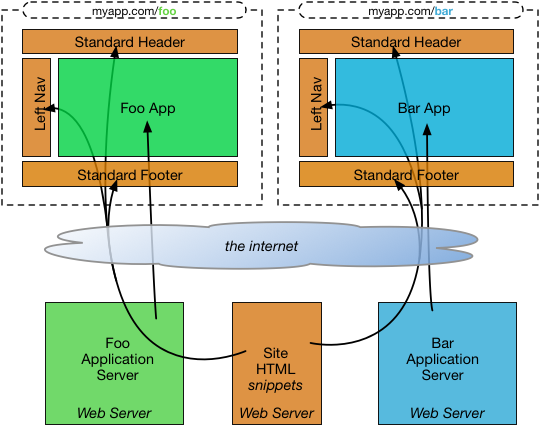

As Google did for a site experience for multiple apps?

Each app was its own web server, but snippets of site experience were injected into specific parts of the page. The research I did on this before was really only about the top nav, but the design is the same for multiple injectable parts of a page. Above, I’m showing the “site experience” being pulled in via an HTTP request to a sideways web server, but I really don’t know how Google does it. It could just as easily have been a library function, meaning the technology was effectively linked in at build time. Smart caching of those rectangles per user on the server side makes it fast either way. Maybe, though, you’re not in the habit of serving up the main encompassing window too many times a day (per user).

Decorating in the DOM

These days we should consider decorators for such site experience on the client-side:

The app’s main page loads, and then pulls in as many further “site” rectangles as needed. The browser handles caching for the rectangles, so cache timeouts should be chosen carefully. This could mean injecting into empty DIVs within the page. It could also be a design that leverages iframes.
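As a rough sketch of the empty-DIV variant, assume each placeholder carries a hypothetical `data-site-src` attribute naming the URL of its rectangle. Neither that attribute nor the `fillRectangles` and `fetchHtml` helpers come from any library; they are names invented for illustration.

```javascript
// Sketch only: `data-site-src`, fillRectangles() and fetchHtml() are
// hypothetical names, not part of any library.
// `root` is anything with querySelectorAll (normally `document`);
// `loadHtml` takes a URL and returns a Promise of an HTML string.
async function fillRectangles(root, loadHtml) {
  const placeholders = Array.from(root.querySelectorAll('[data-site-src]'));
  // Load all rectangles in parallel. A failed load leaves that DIV empty
  // instead of breaking the whole page.
  const results = await Promise.all(placeholders.map(async (el) => {
    try {
      el.innerHTML = await loadHtml(el.getAttribute('data-site-src'));
      return true;
    } catch (err) {
      return false;
    }
  }));
  return results.filter(Boolean).length; // number of rectangles filled
}

// Default loader for browsers: a plain GET, so normal HTTP caching applies.
async function fetchHtml(url) {
  const response = await fetch(url);
  if (!response.ok) throw new Error('HTTP ' + response.status + ' for ' + url);
  return response.text();
}

// In a browser, start once the app's own markup has been parsed.
if (typeof document !== 'undefined') {
  document.addEventListener('DOMContentLoaded', () => {
    fillRectangles(document, fetchHtml);
  });
}
```

A placeholder would then look like `<div data-site-src="/site/top-nav.html"></div>` (the URL is made up). Note that script tags inside markup assigned via `innerHTML` are not executed, so this only suits rectangles that are plain markup.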

For a decorator to work in the browser, it would have to be DOM savvy. Here is the hypothesis for how that would work:

1. A page is retrieved by the browser as normal (GET)

2. It is determined that site “decoration” is needed, and it is then applied

3. Repeat step 2 until there are no more decorators to apply

4. Present page to the end user - phew!

What you can’t do is block the first showing of the page until it has been decorated. Or maybe you can block it, but that’s not going to be easy or smooth. There’s certainly no W3C way of participating in the page load cycle that way. Yet, at least. In lieu of that, frameworks like AngularJS use a cloaking technique to get there.
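The steps above, plus a crude cloak, could be sketched as follows. This assumes a hypothetical `data-cloak` attribute that a stylesheet rule such as `[data-cloak] { display: none }` hides (the same idea as AngularJS’s `ng-cloak`), and decorators modelled as plain objects with `isNeeded` and `apply` functions. All of these names are invented for illustration.

```javascript
// Sketch only: the decorator shape and `data-cloak` are hypothetical.
// Each decorator is { isNeeded(root) -> boolean, apply(root) -> void }.
function decorateUntilDone(root, decorators, maxPasses = 10) {
  let applied = 0;
  // maxPasses guards against a decorator that always reports it is needed.
  for (let pass = 0; pass < maxPasses; pass++) {
    const pending = decorators.filter((d) => d.isNeeded(root));
    if (pending.length === 0) break; // no more decorators to apply
    for (const d of pending) {
      d.apply(root);
      applied++;
    }
  }
  return applied;
}

// Decorate, then reveal the page, even if a decorator threw.
function decorateThenReveal(root, decorators) {
  try {
    return decorateUntilDone(root, decorators);
  } finally {
    for (const el of Array.from(root.querySelectorAll('[data-cloak]'))) {
      el.removeAttribute('data-cloak'); // the CSS rule no longer matches
    }
  }
}
```

In a page, the body (or just the regions to be decorated) would carry `data-cloak`, and `decorateThenReveal(document, decorators)` would be called as early as possible. The reveal sits in a `finally` block so that a failing decorator leaves the user with an undecorated page rather than a blank one.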

What about Sitemesh.js?

In 2001, Joe Walnes (he figures a lot in this blog entry) worked at a company using Microsoft’s old ASP technology, and wanted to do some Sitemesh style separations. The technologies at the time did not support it, so Joe tried to do it in JavaScript. Here is what he recalls (and I’m copy/pasting from his email to me, and this isn’t exactly working code):

Samplepage.html:

    <html>
    <body>
    <script src="sitemesh.js"></script>
    some vanilla html here
    </body>
    </html>

decorator.html:

    <html>
    <body>
    ... all your common stuff ...
    <div id="sitemesh-body"></div>
    ... more stuff
    </body>
    </html>

sitemesh.js:

    onload = function() {
        // grab the content of the current (undecorated) page
        var content = document.body.innerHTML;

        // create hidden <iframe src='decorator.html'>
        var iframe = document.createElement('iframe');
        iframe.style.display = 'none'; // hide

        // the decorator loads asynchronously, so merge only once it is ready
        iframe.onload = function() {
            var decorator = iframe.contentDocument;

            // copy vanilla contents from current page into sitemesh decorator placeholder
            var sitemeshPlaceholder = decorator.getElementById('sitemesh-body');
            sitemeshPlaceholder.innerHTML = content;

            // finally, replace all the contents of this page, with
            // the contents of the merged content in the iframe
            // (this also ends up deleting the iframe)
            document.documentElement.innerHTML = decorator.documentElement.innerHTML;
        };

        iframe.src = 'decorator.html'; // load decorator in iframe
        document.body.appendChild(iframe);
    }

Tired from the trip down memory lane, Joe ends with: “I’m sure there were lots of little quirks to work out, but that’s the basic gist.”

This isn’t perfect, and experienced DOM developers would point out the age-old love/hate relationship with iframes at the center of that imperfection. It’s not going to feel quite right in use, because of focus issues. Also the inner iframe and the outer non-iframe don’t share the same URLs. More on that later.
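For comparison, here is a minimal sketch of the same merge done without a hidden iframe, by fetching the decorator and parsing it off-screen. It assumes the decorator is served from the same origin (or with suitable CORS headers) and reuses the `sitemesh-body` placeholder id from Joe’s email. The function names `mergeIntoDecorator` and `decoratePage` are made up, and this is not something Joe proposed.

```javascript
// Sketch only: mergeIntoDecorator() and decoratePage() are hypothetical names.
// Both arguments are Document-like objects: `pageDoc` is the undecorated
// page, `decoratorDoc` is a parsed copy of decorator.html.
function mergeIntoDecorator(pageDoc, decoratorDoc, placeholderId = 'sitemesh-body') {
  const placeholder = decoratorDoc.getElementById(placeholderId);
  if (!placeholder) return false; // no placeholder: leave the page alone
  // Copy the vanilla content into the decorator's placeholder...
  placeholder.innerHTML = pageDoc.body.innerHTML;
  // ...then swap the decorated markup back into the live page.
  pageDoc.body.innerHTML = decoratorDoc.body.innerHTML;
  return true;
}

// Browser wiring: fetch decorator.html, parse it off-screen, then merge.
async function decoratePage(decoratorUrl = 'decorator.html') {
  const response = await fetch(decoratorUrl);
  if (!response.ok) throw new Error('HTTP ' + response.status);
  const html = await response.text();
  const decoratorDoc = new DOMParser().parseFromString(html, 'text/html');
  return mergeIntoDecorator(document, decoratorDoc);
}

if (typeof window !== 'undefined') {
  window.addEventListener('load', () => {
    decoratePage().catch(console.error);
  });
}
```

Because the page is re-serialized through `innerHTML`, any event listeners already attached to the original elements are lost, and scripts in the merged markup do not run again. The page keeps its own URL, though, and there is no second frame to compete for focus.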

What would Joe do today?

Maybe it is still a client-side iframe solution, he says. One with a rigid set of user interface standards. I quote from another of Joe’s emails, where he outlines an iframe usage for this that is in production and solid, and similar in principle to the above:

Pros & Cons

Pros:

- Easy to compose pages: the integration point is a url (each can be run from a different server)

- Strong compartmentalization: css, js functions, etc cannot leak out

- Easy to develop iframe contents in isolation (without the container page)

- Can use window.postMessage() to build message based communication between frames

- Allows complete choice of tech stack within frame

Cons:

- Assembling a page this way can look like a hodgepodge mess: you need strong user interface guidelines and in many cases you may choose to share css of tech stack dependent widgets

- Some things can be awkward. Eg if an iframe has focus it will take the keyboard events from the main page. And a global onload handler is hard. I recommend sharing a common js lib across all frames to help coordinate things

His thoughts on other solutions:

- Sitemesh only really appropriate for static server rendered sites. I use it for content centric sites but not app centric things

- The official web components spec may be the future of this but at the moment i find it still very clunky and i’ve been bitten a few times by the spec changing

- Alternatively adopt a Javascript framework and standardize with clear packages and boundaries to prevent chance of monolith. Problem with this is you’ll be stuck with it for life.
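(An illustrative aside, not from Joe’s email.) The `window.postMessage()` point in the pros list above could look roughly like this on the container page. The `createFrameRouter` helper, the `{ type, payload }` message shape and the example origins are all assumptions made for the sketch.

```javascript
// Sketch only: createFrameRouter(), the { type, payload } message shape and
// the origins used below are hypothetical.
function createFrameRouter(allowedOrigins, handlers) {
  // Returns a listener suitable for window.addEventListener('message', ...).
  return function onMessage(event) {
    // Ignore messages from origins we do not expect.
    if (!allowedOrigins.includes(event.origin)) return false;
    const message = event.data;
    if (!message || typeof message.type !== 'string') return false;
    if (!Object.prototype.hasOwnProperty.call(handlers, message.type)) return false;
    handlers[message.type](message.payload, event);
    return true;
  };
}

// Container page: listen for messages posted by child frames.
if (typeof window !== 'undefined') {
  const router = createFrameRouter(['https://cart.example.com'], {
    'cart:updated': (payload) => console.log('items in cart:', payload.count),
  });
  window.addEventListener('message', router);
}

// Inside the iframe, the sender would do something like:
//   window.parent.postMessage(
//     { type: 'cart:updated', payload: { count: 3 } },
//     'https://shell.example.com' // target origin of the container page
//   );
```

Checking `event.origin` on the receiving side, and passing an explicit target origin on the sending side, keeps the message channel from undoing the compartmentalization that made iframes attractive in the first place.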

In closing

(me again - not quoting Joe)

Client-side decorating of that site experience isn’t going to please the Google search crawler or their search-preview bot.

You have to really worry about latency and brittleness if you’re going in that direction. Blocking/cloaking needs to be implemented, at least until it becomes a W3C standard, unless your whole production setup is so fast nobody notices. If your community is logged-in users, rather than reddit-clicking guests, then maybe they are more forgiving about the experience, and more likely to avail of the cached elements too.

Then there are the focus and URL issues that are bigger or smaller based on which of the client-side solutions you choose.

Comments formerly in Disqus, but exported and mounted statically ...

| Date | Commenter | Comment |
|---|---|---|
| Wed, 23 Sep 2015 | Graham Hay | sounds a bit like Udi Dahan's "vision" for UI composition - http://udidahan.com/2012/06... |