Paul Hammant's Blog:

Content Syndication using Source Control

Content Syndication Today is Rudimentary.

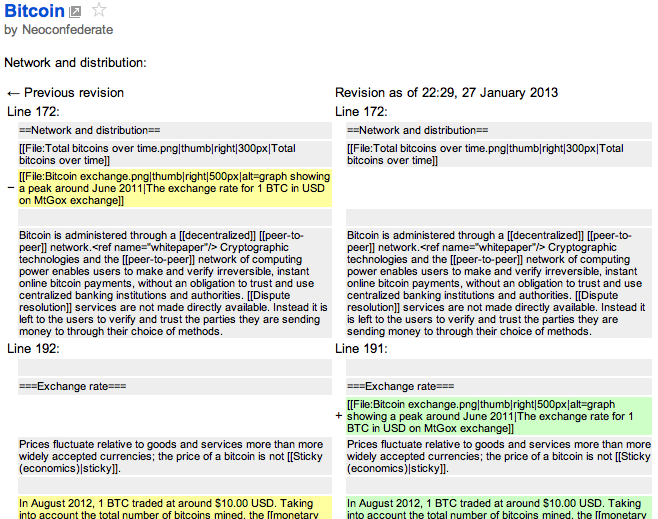

Sure we have RSS (and all it’s twists and turns since 1999), and Atom since 2003. They are effective mechanism to subscribe to new content from a site. RSS and Atom either communicate all of an article/posting, or an excerpt. Wikipedia has an Atom feed for new pages, changes etc, that kinda shows how deep you can go (Google Reader screen shot):

The payload of that Atom feed is an HTML table inside the content element. That is really too deep though. Atom and RSS are not designed for diffing.

Better would be some RESTful URL to a source-control API for the changed/new/deleted item.

A contrived problem statement

“What have the Romans ever done for us”

OK, so consider two political pressure groups, courtesy of Britain’s #1 comedy movie, The Life of Brian: The People’s Front of Judea, and The Judean People’s Front. PFoJ and JPF respectively. Being concerned with the need to curate their own content over thousands of years, both obviously back their web sites with a source-control tool and consider a simple article source format (Markdown) to be valuable. They really don’t co-operate much (a joke in the sketch), but what if they agreed on a single topic - What have the Romans ever done for us? The PFoJ could, say, write an article citing the advances made by Roman civilization (sanitation, medicine, education, wine, public order, irrigation, roads, a fresh water system, public health and peace), and publish it through their website. Say the article source were in Markdown. How could the JPF syndicate that single page of content from amongst the tens of thousands that they are not interested in? Cut and paste would be one way, but how do they track changes to the article going forward? RSS or Atom - sure, that notes that a change has happened (if the publisher uses it in that way), but they are only notification systems, and almost nobody pulls follow-up article modifications from them. We’ll come back to this, but remember it’s one article from tens of thousands of articles that we are interested in.

What I think is needed

Consider a directory tree held under source-control. For part of that tree, I want to refer to part of the tree of another source-control repo/branch, and base what’s stored locally on the HEAD revision of the node(s) affected. The ‘part’ is a subdirectory, or an individual resource. For the resources that are canonical in the ‘other’ repository, I may want to locally modify them. The resources from the other repo might be subject to a license. I’m guessing that would be at least “Credit Us” and “Use our Google-Ads”.

The PFoJ, who use Jekyll, have their Romans.markdown source like so:

---

layout: article

title: "What have the Romans ever done for us?"

license: /licenses/Credit_Ads_Counts.html

permalink: "/romans.html"

adsense_id: 336h4hd6s554

---

What have the Romans ever done for us?

======================================

Well, apart from the sanitation, medicine, education, wine, public order, irrigation, jet engines, roads, the fresh water system and public health

...

Note the Front-Matter header, before the actual text. The JPF also use Jekyll, and modify the article like so:

---

layout: reference

title: "What have the Romans ever done for us?"

license: /terms/dont_syndicate.htm

permalink: "/what_have_the_romans_ever_done_for_us"

adsense_id: 336h4hd6s554

credit: http://pfoj.org/romans.html

---

What have the Romans ever done for us?

======================================

Well, apart from the sanitation, medicine, education, wine, public order, irrigation, jet engines, roads, the fresh water system and public health

...

Behind the scenes, as well as that local modification, the source-control system should store the revision/digest of the canonical repo. It should be possible to auto-merge modifications from the canonical repo, defer them or ignore them. Defer and ignore are essentially the same thing. Where a merge is too complicated for automatic resolution, the normal source-control three-way merge will be required.
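A minimal sketch of that bookkeeping, in Python, might look like the following. The `SyndicatedResource` record, its field names, the policy strings and the `plan_update` function are all hypothetical names invented for illustration; none of them exist in Git, Subversion or any other tool. The sketch assumes the local repo remembers a digest of the canonical content it last merged, and compares that with the current canonical and local content to decide what to do.

```python
import hashlib
from dataclasses import dataclass


def digest(text):
    """Content digest used to detect change (SHA-1 is an arbitrary choice here)."""
    return hashlib.sha1(text.encode("utf-8")).hexdigest()


@dataclass
class SyndicatedResource:
    # Hypothetical record kept by the local repo for one syndicated resource.
    upstream_url: str   # where the canonical copy lives
    base_digest: str    # digest of the canonical content last merged locally


def plan_update(res, upstream_text, local_text, policy="merge"):
    """Decide what to do when the canonical repo is checked for changes."""
    if digest(upstream_text) == res.base_digest:
        return "up-to-date"        # canonical copy has not moved
    if policy in ("defer", "ignore"):
        return "skipped"           # defer and ignore behave the same way
    if digest(local_text) == res.base_digest:
        return "take-upstream"     # no local divergence: just take the new content
    return "three-way-merge"       # both sides changed since the last merge


base = "Well, apart from the sanitation ..."
res = SyndicatedResource("http://pfoj.org/romans.html", digest(base))
print(plan_update(res, base + " (fixed)", base + " (locally edited)"))
```

Only the last outcome needs the full merge machinery; the others are cheap digest comparisons.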

In terms of our contrived case: When the PFoJ get round to fixing their error (‘jet engines’ should be removed) and commit, the JPF should be able to process that change using source-control tools. That’s the point - far superior to notification of the same thing via RSS or Atom.
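To make the contrived case concrete, here is a rough Python sketch of how the ‘jet engines’ fix could flow to the JPF while their Front-Matter changes are retained. It is a coarse, part-level three-way merge (Front-Matter and body are each treated as one unit), not the line-by-line merge a real source-control tool performs. The function names are hypothetical and the article text is abbreviated for the example.

```python
def split_front_matter(source):
    """Split a Jekyll source file into (front matter, body)."""
    # The file starts with '---', so the first piece of the split is empty.
    _, front, body = source.split("---\n", 2)
    return front, body


def merge_part(base, local, upstream):
    """Three-way rule applied to one whole part (front matter or body)."""
    if local == upstream or upstream == base:
        return local       # nothing new upstream, or both sides already agree
    if local == base:
        return upstream    # only upstream changed: take it
    raise ValueError("both sides changed this part: manual merge needed")


def merge_article(base, local, upstream):
    b_front, b_body = split_front_matter(base)
    l_front, l_body = split_front_matter(local)
    u_front, u_body = split_front_matter(upstream)
    front = merge_part(b_front, l_front, u_front)
    body = merge_part(b_body, l_body, u_body)
    return "---\n" + front + "---\n" + body


# Abbreviated versions of the article, for illustration only.
BASE = ('---\nlayout: article\npermalink: "/romans.html"\n---\n'
        'Well, apart from the sanitation, irrigation, jet engines and roads\n')
LOCAL = ('---\nlayout: reference\ncredit: http://pfoj.org/romans.html\n---\n'
         'Well, apart from the sanitation, irrigation, jet engines and roads\n')
UPSTREAM = ('---\nlayout: article\npermalink: "/romans.html"\n---\n'
            'Well, apart from the sanitation, irrigation and roads\n')

merged = merge_article(BASE, LOCAL, UPSTREAM)
print(merged)
```

Here `BASE` is the PFoJ article as first syndicated, `LOCAL` is the JPF copy, and `UPSTREAM` is the PFoJ article after the fix. The merged result keeps the JPF Front-Matter and picks up the corrected body.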

Standards?

WebDAV was created as a standard for Web Distributed Authoring and Versioning. Subversion implements it (Greg Stein, one of the Subversion developers, helped define WebDAV). While there are other reasons to use WebDAV, I don’t care for standards ahead of competing implementations that refine the ideas. Note that above, for the “romans” articles, I only care to show the effect on the content and implicit tracking (not how it’s implemented).

What about today’s source-control tools?

Both Git ‘Submodules’ and Subversion ‘Externals’ allow a composite design, with a main repo and subordinate ones participating. Both insist that any changes go back to the repo from which the subordinate was cloned/checked-out. In other words, neither allows for local modifications to be retained.

In the case of the Git Submodules, it is always a reference to a whole branch/repo - not any subdirectory or an individual resource within. Subversion can at least point to a sub-directory of the repo.

Secondly, Git treats all repositories as equal, and the notion of a ‘canonical one’, when all have the same/similar content and history (via clone), is simply down to human agreement on its location. With our syndication idea, there is a canonical upstream repo though. With normal Git (no submodules) it is not complicated: the JPF could merge the fix to the article they’re interested in, even with the Git model. That only works for a clone/fork of all articles though (which in this scenario is costly).

So what I really want is exactly like Git, except:

- sub-directories or individual resources (as a choice) rather than whole repositories only

- local modification allowed (Maintained divergence)

Or what I want is exactly like Subversion, except:

- local modification allowed (Maintained divergence)

- doesn’t automatically update subordinates (command-line switch “--ignore-externals” by default)

Real World Uses

News media/publications (newspapers etc) already have channels for syndication of articles that they are quite happy with - Associated Press and the like. AP even covers charging as well as content. One thing that is characteristic of newspaper publishing in particular, though, is that the articles are highly transient. They are typically not revisited. That’s related to the old print-only days, and the process of drafting and editing towards deadlines, then shifting priorities to the next edition. Although that is changing for old media, there is little interest in merging updates from upstream articles they may have syndicated.

More likely users of this are groups and organizations with a medium/long-term agenda and a message that is being refined. Consider two (that don’t exist): “Against Software Patents” and “Limiting Software Patents”. Both want to make a cohesive portal for their effort. Both would be a mix of content/articles, events, and style. They’d share a lot of positions on the worth of Software Patents to the economy. They might differ only in that the latter is willing to agree that Software Patents could exist, but with a limit of, say, five years before final expiry. On the campaign against Software Patents, I missed a portal previously - “End Software Patents” has endsoftpatents.org which is a Wordpress site, and en.swpat.org/wiki/Software_patents_wiki:_home_page which is MediaWiki (yes that’s the home page). This one is ripe for GitHub Pages and the Jekyll toolchain I prefer, even as is and without the fancy source-control tool enhancements I’m outlining here. Ciarán O’Riordan spends a lot of time updating it.

Similar positions could be held by QuestionCopyright.org (who exist), AbolishCopyright.com (gone stale), and “Rollback the Disney Act” (does not exist). They have common ground, and could benefit from each other’s activities. QuestionCopyright.org does not have a huge agenda, it is more about education, and uses Drupal today. Again - ripe for the Jekyll toolchain I prefer (and all that pull-request goodness).

Syndicated by DZone.com